These posts are getting longer! Today I discuss expected value calculations – what they are, why they’re important for making a good difference on balance…and some further reading if you already knew all of this.

“Can I avoid causing bad things to happen?”

Everything we do has both good and bad consequences. I give money to a homeless woman – she is grateful but her friend down the road feels jealous. I lose my temper with a friend – he feels hurt but it also makes him more emotionally resilient. I go for a run – the exercise improves my health but also makes my legs hurt. Even doing something with very good consequences like donating to our top charity, the Against Malaria Foundation, is bound to also have some bad consequences somewhere down the line – perhaps bed nets block a welcome breeze for a lot of people.

Clearly it would be foolish to live our lives trying to completely avoid causing anything bad to happen. Rather, I think that we should try to make sure that our actions have good consequences on balance. A popular framework in the effective altruism movement for working out the extent to which good consequences outweigh bad consequences, or vice versa, is that of “expected value calculations”. The concept of expected value is an important one for effective altruists for reasons I will go into below.

“How do I calculate expected value?”

The expected value of an action is basically the value of that action once you have taken uncertainty about the outcome into account. It is calculated by adding up the value of each possible consequence of the action, after you’ve multiplied each one by the chance of that consequence occurring.

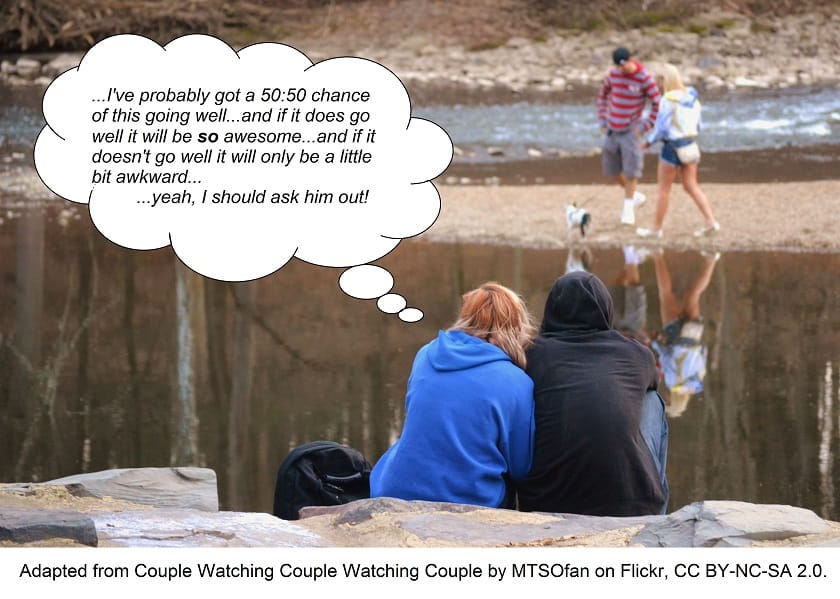

This might sound a bit complicated, but it’s the kind of thing we work out roughly in our heads all the time. Let’s say you are considering the action of asking someone out on a date. You predict that there’s roughly a 50% chance they’ll say “No”, and if they do, that will mean you will both feel a bit embarrassed – let’s give that a value of -10. But that -10 doesn’t actually count for much because there’s only a 50% chance of it occurring; in fact, it counts for 50% of -10, which is only -5. Now let’s not forget the 50% chance that they’ll say “Yes”. If they do say “Yes”, you reckon that will make you both much happier than you are currently – about twenty times more happiness than the sadness you’d both experience if they said “No” – so the value of this consequence is 200 (that is, 20 x 10). Remember to halve the figure because there is only a 50% chance of this consequence happening, and you get a value of 100. Now all you need to do is add the two weighted outcomes together: 100 – 5 = 95. Wonderful, a positive number! The expected value of asking this person on a date is positive – the good outweighs the bad. Go for it.

Of course, you can go more fine-grained than this, splitting the consequence “They say ‘Yes’” into “They say ‘Yes’ and we end up being really compatible” and “They say ‘Yes’ and we end up not being really compatible”, but I hope my example illustrates the basic method.
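
For anyone who likes to see the arithmetic spelled out, here is a minimal Python sketch of the date example. The function name `expected_value` and the numbers in the fine-grained version (how the “Yes” outcome splits, and what each half is worth) are my own illustrative assumptions, not figures from anywhere else.

```python
def expected_value(outcomes):
    """Add up probability * value over a list of (probability, value) pairs."""
    total_probability = sum(p for p, _ in outcomes)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("the probabilities of all outcomes should sum to 1")
    return sum(p * v for p, v in outcomes)


# The date example: a 50% chance of "No" (value -10)
# and a 50% chance of "Yes" (value 200).
ask_out = [(0.5, -10), (0.5, 200)]
print(expected_value(ask_out))  # 95.0

# A finer-grained version. The split of "Yes" into two outcomes and the
# values 350 and 50 are hypothetical, chosen so that they average to 200.
ask_out_fine_grained = [
    (0.5, -10),   # they say "No"
    (0.25, 350),  # they say "Yes" and you are really compatible
    (0.25, 50),   # they say "Yes" and you are not really compatible
]
print(expected_value(ask_out_fine_grained))  # 95.0
```

Splitting an outcome into finer pieces only changes the answer if your estimates of the pieces do not average out to your original estimate of the whole.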

Let’s now think about the “ethical banker” that I referred to in my previous post. Would you do more harm than good by becoming a banker and donating a large chunk of your salary to effective charities? 80,000 Hours says “No” in their article Show me the harm, arguing that to claim otherwise “would imply that finance is doing something as bad as causing all the deaths in the world”. I’d be interested to hear your take on the matter in the comments below!

“Why bother calculating expected value?”

If we can do this sort of thing in our heads very quickly, why am I bothering to tell you about the explicit calculation? Three reasons come to mind:

- The expected value framework is particularly useful for effective altruists because they tend to be comfortable with taking financial risks (at least once they have secured enough to ensure themselves a moderately comfortable life). Most people, given two options with similar expected value, would prefer the less risky option – if offered $10,000 for certain or a 50:50 gamble of getting $0 or $21,000 (an expected value of $10,500), most people would choose the “safe” option and take the $10,000. This is because it is usually the case that the more dollars you already have, the less valuable each additional dollar is to you. But for effective altruists, every extra $10,000 they have to give away is still roughly five lives saved no matter how much they’ve already gained and given, making expected value a good guide for action.

- Doing these calculations properly helps to uncover any cognitive biases at play in our thinking, so it can be worth the trouble for big, important decisions. The mind is a messy place, and the quick judgement calls that happen in your head often mix in various biases without you noticing. If laying out the calculation gives you one answer and your “intuition” says another, that might be because your intuition has some inbuilt biases as well as making use of a rough expected value calculation. I think that calculating expected value is a particularly good way to avoid scope neglect.

- Explicitly calculating expected value can help us decide what to do in unfamiliar situations that our intuitions are poorly trained to deal with. For example, the human mind has had little experience with making decisions that affect the far future or large numbers of people today, because it is only recently that a large proportion of the human population has gained this power.
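
The first reason above, about risk, can be made concrete with a rough sketch. It compares the certain $10,000 with the 50:50 gamble in two ways: counting dollars (or lives saved) at face value, and counting them with diminishing returns. The logarithmic utility function and the starting wealth of $20,000 are assumptions I have picked purely for illustration; the $2,000 per life is just the “roughly five lives per $10,000” figure restated.

```python
import math


def expected_value(outcomes):
    """Add up probability * value over a list of (probability, value) pairs."""
    return sum(p * v for p, v in outcomes)


sure_thing = [(1.0, 10_000)]
gamble = [(0.5, 0), (0.5, 21_000)]

# Counting every dollar equally, the gamble comes out ahead.
ev_sure = expected_value(sure_thing)
ev_gamble = expected_value(gamble)
print(ev_sure, ev_gamble)  # 10000.0 10500.0

# For a donor, dollars convert to lives at a roughly constant rate,
# so the ranking is the same when measured in lives.
dollars_per_life = 2_000  # from "roughly five lives per $10,000"
print(ev_sure / dollars_per_life, ev_gamble / dollars_per_life)  # 5.0 5.25

# For personal spending, assume (hypothetically) that value grows with the
# logarithm of total wealth, so each extra dollar is worth a little less.
starting_wealth = 20_000  # hypothetical


def utility(gain):
    return math.log(starting_wealth + gain)


eu_sure = expected_value([(p, utility(x)) for p, x in sure_thing])
eu_gamble = expected_value([(p, utility(x)) for p, x in gamble])
print(eu_sure > eu_gamble)  # True: the "safe" option wins for the spender
```

Under these assumptions the same two options rank differently depending on whether value is linear in dollars (the donor) or has diminishing returns (the personal spender), which is the contrast the first bullet describes.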

“I already know how to calculate expected value.”

Those of you already familiar with probability theory might be interested in reading more about:

- Dealing with huge numbers of unknown possible consequences by rewarding actions that have generally positive effects at utilitarian-essays.com

- Penalizing weak evidence by adjusting towards one’s “Bayesian prior” in the context of charity cost-effectiveness at givewell.org

Look out for the final post in this series tomorrow on “Making the Most Difference”. For the first post in our Making a Difference 101 series, click here.